Category Archives: Analysis

#UONCriminologyClub: Introduction to Criminology with Dr Manos Daskalou

In celebration of the 25 years of Criminology at UON, we have been hosting a number of events that demonstrate the diversity and reach criminology has as a discipline in different communities. In a spirit of opening a wider dialogue we have created a series of online classes for young home educated learners (10-15) to provide some taster sessions about criminology. This is a reflection of the very first one.

Setting up a session for young learners is not an easy feat! The introduction session was about to set the tone with the newly formed “Criminology Club”, like the old Mickey Mouse Club, only with more crime and fewer mice! The audience of our new crime-busters was ready to engage. The pre-session activity was set and the tone for what was to follow was clear. For an hour I would be conversing on crime. To get through the initial introductions with the group, we went over the activity: top crimes and reasons for arranging them in that order. Our learners went into a whole range of criminalities and provided their own rationale for what they thought made them serious. There is a complex simplicity in this activity; regardless of age or experience, our understanding and, most importantly, our justification of crime tells us more about us than about the person committing it. Once we were done with the “pleasantries” we moved into the main part of the class.

Being an introductory session, it was important to set it right; telling a story and framing it into a conversation is important. What better way to start the story of crime than to tell a story we all knew when growing up: a fairy-tale? Going for a classic fairy-tale seemed to be the best way to go!

For this session the fairy tale chosen was Cinderella.

“I really enjoyed today’s session! I feel enlightened – Dr @manosdaskalou was great and I really loved the activities. I didn’t know the original story of Cinderella – it’s so horrifying. I didn’t think of crime in fairy-tales before but now I will be on the look out.” (Quinn age 12).

The original tale, like most fairy-tales, has a fairly brutal twist that strongly reinforces the cautionary tale within the story. This was an audience participation narration and the help of the “crime-busters” was necessary every step of the way. We worked on understanding the types of crimes being committed at every turn of the tale, while wondering whether this would be regarded as appropriate behaviour now. Suddenly the fairy tale becomes an archive of social trends, beliefs and actions, captured in the spin of the story. An hour was far too little time to cover a simple fairy tale!

“I would like to thank Dr @manosdaskalou for today. I had an amazing time. The only thing I didn’t like was when it ended. I like stories so I enjoyed when we talked about Cinderella, I didn’t realise how gruesome the original one was!” (Paisley age 10).

There is something interesting in running over a familiar tale and looking at it from a different perspective: the process of decoding messages and reviewing narratives. For a younger audience the terms may sound incomprehensible, but it is amazing how much narrative analysis the new “crime-busters” did! Our social conventions are so complex, yet despite that a child at the age of 10 can pick them up and put them in the right order. Seeing them confront the different dilemmas the story posed, on so many different levels, was an interesting process. It is always a challenge to pitch any material at the right level but on this occasion, for this group, about this story, in this instance, the “crime-busters” were introduced to Criminology!

“We had so much fun today in our first criminology lesson with Dr @manosdaskalou from UON. Time flew by so quickly, I was so interested in everything we were discussing and wanted to know more and more. In today’s session we pulled apart the fairytale Cinderella discussing what crimes the characters in it had committed and why. I thought this was a really great idea. I was having so much fun in the lesson that I didn’t realise how much I was actually learning but now that we have finished I realise I know much more about criminology and how to study a classic text with Criminology in mind. A big thank you to @manosdaskalou who made it an incredibly fun and engaging session. I’m sure I speak for most of us when I say I can’t wait to come back next time and learn more.” (Atty aged 14).

The end of the session left the group of “crime-busters” wanting more. Other colleagues will continue offering more sessions to an early generation of learners getting to know the basics about “Criminology”, a discipline that many people think they know from true crime, little realising we spend so much time dispelling the myths! Who would imagine that the best way to do so was to tell them a fairy tale?

Reflecting on Adolescence

This short series from Netflix has proven to be a national hit, as it rose to be the #1 most streamed programme on the platform in the UK. It has become a popular talking point amongst many viewers, with the programme even reaching parliament and receiving praise from the government. After watching it, I can say that it is deserving of its mass popularity, with many aspects appealing to my own interests.

It is not meant to be an overly dramatised show as we see from other programmes on Netflix. Whilst it fits in the genre of “Drama” it mainly serves itself as a message and portrayal of how toxic masculinity takes form at a young age. One episode was an hour long interrogation that became difficult to watch as it felt as if I was in the room myself, seeing a young boy turn from being vulnerable and scared to intimidating, aggressive and manipulative. As a programme, it does its job of engagement, but its message was displayed even better. Our society has a huge problem with perceptions of masculinity and how young men are growing up in a world that normalises misogyny. The microcosm that Adolescence shows encapsulates this problem well and highlights the problem of the “manosphere” that many young men and even children are turning to as they become radicalised online.

Commentators such as Andrew Tate have become huge idols to their followers, who are often labelled as “incels”. Since his rise in popularity in past years, an epidemic of these so-called manosphere followers has perpetuated misogyny in every corner of their lives, following and believing tales like the “80-20 rule”, in which 80% of women are attracted to 20% of men. This kind of mindset is extremely dangerous and, as displayed in Jamie’s behaviour, leads to a feeling of necessity in regard to women liking them. This behaviour isn’t exactly new; it is a form of misogyny that has plagued society for as long as society has been around. However, it has been perpetuated further by the “Commentaters”, as I call them.

As a fan of the Silent Hill series, I have always enjoyed stories that dive deep into the psyche and explore wider themes in ways that make the audience uncomfortable, yet willing, to confront. Adolescence does this in the form of a show not so disguised as an overarching message. I feel like it has done its job of making people reflect and critically think about what is wrong with society, and exposing those who do not think about the wider messages and only care about entertainment. I mean, people sit and question whether or not Jamie did the crime and argue that he is not guilty, when the show explicitly shows and tells you what happens through Jamie’s character, demeanour and interactions in the interrogations.

Misogyny and the forces that uphold it are not new concepts, nor will they become ancient ones any time soon, given the way contemporary society functions. Even as society may become more tolerant, there will always be a way for women to be disadvantaged. However, stories like Adolescence may provide a glimmer of hope by dissecting the problem and being a piece of the puzzle that brings together the wider branches of misogyny, allowing more people to explore its underpinnings.

Britain’s new relationship with America…Some thoughts

Within the coming weeks, Keir Starmer is due to meet Donald Trump and in doing so has offered an interesting view into the complexities of managing diplomacy in the modern age. Whilst the UK and US work collaboratively through bi-lateral trade agreements, and national security collaborations, the change in power structures within the UK and USA marks significant ideological difference that can arguably present a myriad of implications for both countries and for those countries who are implicated by these relations between Britain and America. In this blog, I will outline some of the factors that ought to be considered as we fast-approach this new age of international relations.

It can be understood that Starmer meeting Trump, despite some ideological difference is rooted in a pragmatic diplomacy approach and for what some might say is for the greater good. In an age of continual risk and uncertainty, allyship across nations has seldom been more necessary nor consolidated. On addressing issues including climate change, national security, trade agreements within a post-Brexit adversity, the relationship between America and Britain I sense is being foregrounded by Starmer’s Labour Government.

Moreover, I consider that Starmer should tread carefully and not appear globally as though he is too strongly aligned with Trump’s policies, especially on foreign policy. This mistake was once made by Tony Blair, following the New Beginnings movement after 9/11. It is essential that whilst we maintain good relations with America, this does not come at a cost to our own sovereignty and influence on global issues. I see here an opportunity for Starmer to re-build Britain’s place on the global stage. Despite this as what some strategists might call a ‘bigger picture’, it goes without saying that Starmer may face backlash from his peers based on his willingness to enter a liaison with Trump’s Government. For many inside and outside of the Labour Party, the politics of Trump are considered dangerous, regressive, and ideologically dumbfounded. I happen to agree with much of these sentiments, and I think there is a risk for Starmer… that will later develop into a dilemma. This dilemma will be between appeasing the party majority and those who hold traditional Labour values in place of moving further into the clutches of the far right, emboldened by neoliberalism. It is no secret however that the Labour party has entered a dangerous liaison with neoliberalism and has alienated many traditional Labour voters and has offered no real political alternative.

Considering this, I sense apprehension in the air regarding Starmer’s relationship building with America and Donald Trump, which some argue might be more counter-productive than good. Starmer must demonstrate political pragmatism, and arguably the impact of this government and the governments to come will weigh on these relations… Only time will tell how these future relations are mapped out.

By whose standards?

This blog post takes inspiration from the recent work of Jason Warr, titled ‘Whitening Black Men: Narrative Labour and the Scriptural Economics of Risk and Rehabilitation,’ published in September 2023. In this article, Warr sheds light on the experiences of young Black men incarcerated in prisons and their navigation through the criminal justice system’s agencies. He makes a compelling argument that the evaluation and judgment of these young Black individuals are filtered through a lens of “Whiteness,” and an unfair system that perceives Black ideations as somewhat negative.

In his careful analysis, Warr contends that Black men in prisons are expected to conform to rules and norms that he characterises as representing a ‘White space.’ This expectation of adherence to predominantly White cultural standards not only impacts the effectiveness of rehabilitation programmes but also fails to consider the distinct cultural nuances of Blackness. With eloquence, Warr (2023, p. 1094) reminds us that ‘there is an inherent ‘whiteness’ in behavioural expectations interwoven with conceptions of rehabilitation built into ‘treatment programmes’ delivered in prisons in the West’.

Of course, the expectation of adhering to predominantly White cultural norms transcends the prison system and permeates numerous other societal institutions. I recall a former colleague who conducted doctoral research in social care, asserting that Black parents are often expected to raise and discipline their children through a ‘White’ lens that fails to resonate with their lived experiences. Similarly, in the realm of music, prior to the mainstream acceptance of hip-hop, Black rappers frequently voiced their struggles for recognition and validation within the industry due to similar reasons. This phenomenon extends to award ceremonies for Black actors and entertainers as well. In fact, the enduring attainment gap among Black students is a manifestation of this issue, where some students find themselves unfairly judged for not innately meeting standards set by a select few individuals. Consequently, the significant contributions of Black communities across various domains – including fashion, science and technology, workplaces, education, arts, etc – are sometimes dismissed as substandard or lacking in quality.

The standards I’m questioning in this blog are not solely those shaped by a ‘White’ cultural lens but also those determined by small groups within society. Across various spheres of life, whether in broader society or professional settings, we frequently encounter phrases like “industry best practices,” “societal norms,” or “professional standards” used to dictate how things should be done.

However, it’s crucial to pause and ask:

By whose standards are these determined?

And are they truly representative of the most inclusive and equitable practices?

This is not to say we should discard all concepts of cultural traditions or ‘best practices’. But we need to critically examine the forces that establish standards that we are sometimes forced to follow. Not only do we need to examine them, we must also be willing to evolve them when necessary to be more equitable and inclusive of our full societal diversity.

Minority groups (by minority groups here, I include minorities in race, class, and gender) face unreasonably high barriers to success and recognition – where standards are determined only by a small group – inevitably representing their own identity, beliefs and values.

So in my opinion, rather than defaulting to de facto norms and standards set by a privileged few, we should proactively construct standards that blend the best wisdom from all groups and uplift underrepresented voices – and I mean standards that truly work for everyone.

References

Warr, J. (2023). Whitening Black Men: Narrative Labour and the Scriptural Economics of Risk and Rehabilitation, The British Journal of Criminology, Volume 63, Issue 5, Pages 1091–1107, https://doi.org/10.1093/bjc/azac066

Is Easter relevant?

What if I were to ask you what images you conjure regarding Easter? For many, pictures of yellow chicks, ducklings, bunnies, and colourful eggs! This sounds like a celebration of the rebirth of nature, nothing too religious. As for the hot cross buns, these come to our local stores across the year. The calendar marks it as a spring break without any significant reference to the religion that underpins the origin of the holiday. Easter is a moving celebration that observes the lunar calendar, like other religious festivals, its date dictated by the equinox of spring and the first full moon. It replaces previous Greco-Roman holidays, and it takes its influence from the Hebrew Passover. For those who regard themselves as Christians, the message Easter encapsulates is one of the pillars of their faith. The main message is that Jesus, the son of God, was arrested for sedition and blasphemy and went through two types of trials representing two different forms of justice: a secular and a religious court, both of which found him guilty. He was convicted of all charges, sentenced to death, and executed the day after sentencing. This was exceptional speed for a justice system, one that many countries will envy. By all accounts, this man who claimed to be king and divine became a convicted felon put to death for his crimes. The Christian message focuses primarily on what happened next. Allegedly the body of the dead man was placed in a sealed grave, only to be resurrected (returning from death, body and spirit); he roamed the earth for about 40 days until he ascended into the heavens with the promise to be back in the second coming. Christian scholars have spent many hours discussing the representation of this miracle. The central core of Christianity is the victory of life over death. The official line is quite remarkable and provides Christians with an opportunity to admire the head of their church.

What if this was not really the most important message in the story? What if the focus was not on the resurrection but on human suffering. The night before his arrest Jesus, according to the New Testament will ask “Father, if thou be willing, remove this cup from me” in a last attempt to avoid the humiliation and torture that was to come. In Criminology, we recognise in people’s actions free will, and as such, in a momentary lapse of judgment, this man will seek to avoid what is to come. The forthcoming arrest after being identified with a kiss (most unique line-up in history) will be followed by torture. This form of judicial torture is described in grim detail in the scriptures and provides a contrast to the triumph of the end with the resurrection. Theologically, this makes good sense, but it does not relate to the collective human experience. Legal systems across time have been used to judge and to punish people according to their deeds. Human suffering in punishment seems to be centred on bringing back balance to the harm incurred by the crime committed. Then there are those who serve as an example of those who take the punishment, not because they accept their actions are wrong, but because their convictions are those that rise above the legal frameworks of their time. When Socrates was condemned to death, his students came to rescue him, but he insisted on ingesting the poison. His action was not of the crime but of the nature of the society he envisaged. When Jesus is met with the guard in the garden of Gethsemane, he could have left in the dark of the night, but he stays on. These criminals challenge the orthodoxy of legal rights and, most importantly, our perception that all crimes are bad, and criminals deserve punishment.

Bunnies are nice and for some even cuddly creatures, eggs can be colourful and delicious, especially if made of chocolate, but they do not contain that most important criminological message of the day. Convictions and principles for those who have them, may bring them to clash with authorities, they may even be regarded as criminals but every now and then they set some new standards of where we wish to travel in our human journey. So, to answer my own question, religiosity and different faiths come and go, but values remain to remind us that we have more in common than in opposition.

What’s happened to the Pandora papers?

Sometime last week, I was among a group of friends when the argument about the Pandora papers suddenly came up. In brief, the key questions raised were: how come no one is talking about the Pandora papers any more? What has happened to the investigations, and how come the story has now been relegated to the back seat within the media space? Although we didn’t have enough time to debate the issues, I promised that I would share my thoughts on this blog. So, I hope they are reading.

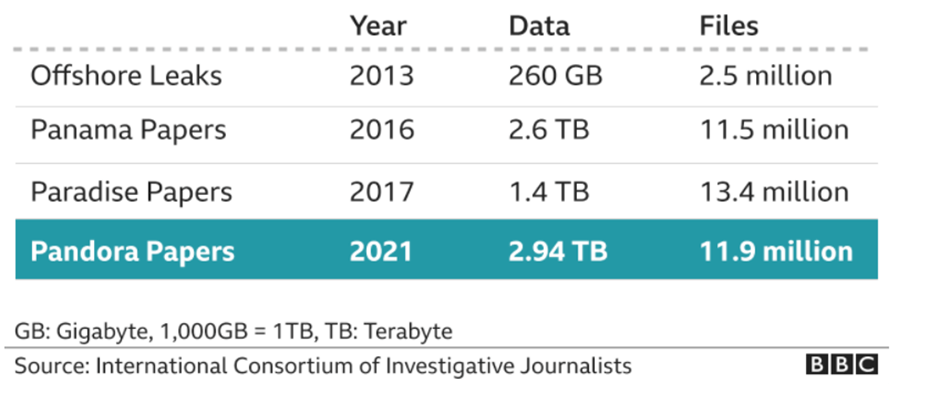

We can all agree that for many years, financial delinquency and malfeasance have remained among the major problems facing many societies. We have seen situations where kleptocratic rulers and their associates loot and siphon state resources, and then stack them up in secret havens. Some of these kleptocrats prefer to collect luxury Italian wines and French art with their ill-gotten wealth, while others prefer to purchase luxury properties and 5-star apartments in Dubai, London and elsewhere. We find military generals participating in financial black operations, and we hear about law makers manipulating the gaps in the same laws they have created. In fact, in some spheres, we find ‘business tycoons’ exploiting violence-torn regions to smuggle gold, while in other spheres, some appointed public officers refuse to declare their assets because of fear of the future. Two years ago, we read about the two socialist presidents of the southern Spanish region and how they were found guilty of misuse of public funds. With the sums totalling about €680m, you can imagine the good that could have been achieved in that region. We should also not forget the case of Ferdinand Marcos and his wife, both of whom (we are told) amassed over $10 billion during their reign in the Philippines. As we can see, from the offshore leak of 2013 to the Panama papers of 2016 and then the 2017 Paradise papers, data leaks have continued to skyrocket. This simply demonstrates the lengths to which politicians and other official state representatives are going to invest in this booming industry.

These stories are nothing new; we have always read about them – but then they fade away quicker than we expect. It is important to note that while some countries are swift in conducting investigations when issues like these arise, very little is known about others. So, in this blog, I will simply be highlighting some of the reasons why I think news relating to these issues has a short life span.

To start with, the system of financial corruption is often controlled and executed by those holding on to power very firmly. The firepower of their legal defence team is usually unmatchable, and the way they utilise their wealth and connections often make it incredibly difficult to tackle. For example, when leaks like these appear, some journalists are usually mindful of making certain remarks about the situation for the avoidance of being sued for libel and defamation of character. Secondly, financial crimes are always complex to investigate, and prosecution often takes forever. The problem of plurality in jurisdiction is also important in this analysis as it sometimes slows down the processes of investigation and prosecution. In some countries, there is something called ‘the immunity clause’, where certain state representatives are protected from being arraigned while in office. This issue has continued to raise concerns about the position of truth, power, and political will of governments to fight corruption. Another issue to consider is the issue of confidentiality clause, or what many call corporate secrecy in offshore firms. These policies make it very difficult to know who owns what or who is purchasing what. So, for as long as these clauses remain, news relating to these issues may continue to fade out faster than we imagine. Perhaps Young (2012) was right in her analysis of illicit practices in banking & other offshore financial centres when she insisted that ‘offshore financial centers such as the Cayman Islands, often labelled secrecy jurisdictions, frustrate attempts to recover criminal wealth because they provide strong confidentiality in international finance to legitimate clients as well as to the crooks and criminals who wish to hide information – thereby attracting a large and varied client base with their own and varied reasons for wanting an offshore account’, (Young 2012, 136). 
This idea has also been raised by our leader, Nikos Passas, who believes that effective transparency is an essential component of unscrambling the illicit partnerships in these structures.

While all these dirty behaviours have continued to damage our social systems, they yet again remind us how the network of greed remains at the core of human injustice. I found the animalist commandment of the pigs in the novel Animal Farm, by George Orwell, to be quite relevant in this circumstance. The decree spells: all animals are equal, but some animals are more equal than others. This idea rightly describes the hypocrisy that we find in modern democracies, where citizens are made to believe that everyone is equal before the law when in fact the law (and in many instances more privileges) is often tilted in favour of the elites.

I agree with the prescription given by President Obama, who once said that strengthening democracy entails building strong institutions over strong men. This is true because the absence of strong institutions will only continue to pave the way for powerful groups to explore the limits of democracy. This also means that there must be strong political will to sanction these powerful groups engaging in this ‘thievocracy’. I know that political will is often used too loosely these days, but what I am referring to here is genuine determination to prosecute powerful criminals with transparency. This also suggests the need for better stability and stronger coordination of law across jurisdictions. Transparency should not only be demanded of governments in societies, but also of those havens. It is also important to note that tackling financial crimes of the powerful should not be the duty of the state alone, but of all. Simply, it should be a collective effort, and it requires joint action. By joint action I mean that civil societies and other private sectors must come together to advocate for stronger sanctions. We must seek collective participation in social movements because such actions can bring about social change – particularly when the democratic processes are proving unable to tackle such issues. Research institutes and academics must do their best by engaging in research to understand the depth of these problems as well as proffering possible solutions. Illicit financial delinquencies, we know, thrive when societies trivialize the extent and depth of the problem. Therefore, the media must continue to do their best in identifying these problems, just as we have consistently seen with the works of the International Consortium of Investigative Journalists and a few others. So, in a nutshell and to answer my friends, part of the reason why issues like this often fade away quicker than expected has to do with some of the issues that I have pointed out. 
It is hoped however that those engaged in this incessant accretion of wealth will be confronted rather than conferred with national honors by their friends.

References

BBC (2021) Pandora Papers: A simple guide to the Pandora Papers leak. Available at: https://www.bbc.co.uk/news/world-58780561 (Accessed: 26 May 2022)

Young, M.A., 2012. Banking secrecy and offshore financial centres: money laundering and offshore banking, Routledge

DIE in Solidarity with Diversity-Inclusion-Equality

As an associate lecturer on a casual contract, I was glad to stand in solidarity with my friends and colleagues also striking as part of UCU Industrial Action. Concurrently, I was also glad to stand in solidarity with students (as a recent former undergrad and masters student … I get it), students who simply want a better education, including having a curriculum that represents them (not a privileged minority). I wrote this poem for the students and staff taking part in strike action, and it comes inspired from the lip service universities give to doing equality while undermining those that actually do it (meanwhile universities refuse to put in the investment required). This piece also comes inspired by ‘This is Not a Humanising Poem’ by Suhaiymah Manzoor-Khan, a British author-educator from Bradford in Yorkshire.

Some issues force you to protest

the way oppression knocks on your front door

and you can’t block out the noise

“protest peacefully, non-violently”

I have heard people say

show ‘the undecided’, passive respectability

be quiet, leave parts of yourself at home

show them you’re just as capable of being liked

enough for promotion into the canteen,

protest with kindness and humour

make allusions to smiling resisters in literature

they’d rather passive images of Rosa Parks all honestly

but not her politics against racism, patriarchy, and misogyny

but I wanna tell them about British histories of dissent

the good and the bad – 1919 Race Riots

the 1926 general strikes, and the not so quiet

interwar years of Caribbean resistance to military conscription

I wanna talk about how Pride was originally a protest

I wanna talk about the Grunwick Strike and Jayaben Desai

and the Yorkshire miners that came to London in solidarity

with South Asian migrant women in what was 1980s austerity

I want to rant about Thatcherism as the base

for the neoliberal university culture we work in today

I want to talk about the Poll Tax Riots of 1990

and the current whitewashing of the climate emergency

they want protesters to be frugal in activism,

don’t decolonise the curriculum

they say decolonise

they mean monetise, let’s diversify …

but not that sort of diversity

nothing too political, critical, intellectual

transform lives, inspire change?

But no,

they will make problems out of people who complain

it’s your fault, for not being able to concentrate

in workplaces that separate the work you do

from the effects of Black Lives Matter and #MeToo

they make you the problem

they make you want to leave

unwilling to acknowledge that universities

discriminate against staff and students systemically

POCs, working-class, international, disabled, LGBT

but let’s show the eligibility of staff networks

while senior leaders disproportionately hire TERFs

staff and students chequered with severe floggings

body maps of indenture and slavery

like hieroglyphics made of flesh

but good degrees, are not the only thing that hold meaning

workers rights, students’ rights to education

so this will not be a ‘people are human’ poem

we are beyond respectability now

however, you know universities will DIE on that hill

instead,

treat us well when we’re tired

productive, upset, frustrated

when we’re in back-to-back global crises

COVID-19, Black Lives Matter, femicide,

failing in class, time wasting, without the right visas,

the right accents; Black, white, homeless, in poverty,

women, trans, when we’re not A-Grade students, when we don’t

have the right last name; when we’re suicidal

when people are anxious, depressed, autistic

tick-box statistics within unprotected characteristics

all permeates through workers’ and student rights

When you see staff on strike now,

we’re protesting things related to jobs yes,

but also, the after-effects

as institutions always protect themselves

so sometimes I think about

when senior management vote on policies…

if there’s a difference between the nice ones ticking boxes

and the other ones that scatter white supremacy?

I wonder if it’s about diversity, inclusion, and equality [DIE],

how come they discriminate in the name of transforming lives

how come Black students are questioned (under caution) in disciplinaries

like this is the London Met maintaining law and order …

upholding canteen cultures of policing

Black and Brown bodies. Decolonisation is more

than the curriculum; Tuck and Yang

tell us decolonisation is not a metaphor,

so why is it used in meetings as lip service –

why aren’t staff hired in

in critical race studies, whiteness studies, decolonial studies

why is liberation politics and anti-racism not at the heart of this

why are mediocre white men failing upwards,

they tell me we have misunderstood

but promotion based on merit doesn’t exist

bell hooks called this

imperialist heteropatriarchal white supremacy

you know Free Palestine, Black Lives Matter, and the rest

we must protest how we want to protest

we must never be silenced; is this me being radical, am I radical

Cos I’m tired of being called a “millennial lefty snowflake”, when I’m just trying not to DIE?!

Further Reading

Ahmed, Sara (2012) On Being Included: Racism and Diversity in Institutional Life. London: Duke.

Ahmed, Sara (2021) Complaint. London: Duke.

Bhanot, Kavita (2015) Decolonise, Not Diversify. Media Diversified [online].

Double Down News (2021) This Is England: Ash Sarkar’s Alternative Race Report. YouTube.

Chen, Sophia (2020) The Equity-Diversity-Inclusion Industrial Complex Gets a Makeover. Wired [online].

Puwar, Nirmal (2004) Space Invaders: Race, Gender and Bodies Out of Place. Oxford: Berg.

Read, Bridget (2021) Doing the Work at Work: What are companies desperate for diversity consultants actually buying? The Cut [online].

Ventour, Tré (2021) Telling it Like it is: Decolonisation is Not Diversity. Diverse Educators [online].

The pathology of performance management: obscuration, manipulation and power

My colleague @manosdaskalou’s recent blog Do we have to care prompted me to think about how data is used to inform government, its agencies and other organisations. This in turn led me back to the ideas of New Public Management (NPM), later to morph into what some authors called Administrative Management. Those of you who have read about NPM and its various iterations, and those of you who have lived through it, will know that the success or failure of organisations was seen through a lens of objectives, targets and performance indicators or Key Performance Indicators (KPIs). In the early 1980s and for a decade or so thereafter, Vision statements, Mission statements, objectives, targets, KPIs and league tables, both formal and informal, became the new lingua franca for public sector bodies, alongside terms such as ‘thinking outside the box’ or ‘blue sky thinking’. Added to this was the media frenzy when data was released showing how organisations were somehow failing.

Policing was a little late joining the party, predominantly, as many an author has suggested, for political reasons which had something to do with neutering the unions, considered a threat to right wing capitalist ideologies. But policing could not avoid the evidence provided by the data. In the late 1980s and beyond, crime was inexorably on the rise and significant increases in police funding didn’t seem to stem the tide. Any self-respecting criminologist will tell you that the link between crime and policing is tenuous at best. But when politicians decide that there is a link and the police state there definitely is, demonstrated by the misleading and at best naïve mantra ‘give us more resources and we will control crime’, then it is little wonder that the police were made to fall in line with every other public sector body, adopting NPM as the nirvana.

Since crime is so vaguely linked to policing, it was little wonder that the police managed to fail to meet targets on almost every level. At one stage there were over 400 KPIs from Her Majesty’s Inspectorate of Constabulary, let alone the rest imposed by government and the now defunct Audit Commission. This resulted in what was described as an audit explosion, a whole industry around collecting, manipulating and publishing data. Chief Constables were held to account for the poor performance and in some cases chief officers started to adopt styles of management akin to COMPSTAT, a tactic born in the New York Police Department, alongside the much vaunted ‘zero tolerance policing’ style. At first both were seen as progressive. Later, it became clear that COMPSTAT was just another way of bullying in the workplace and zero tolerance policing was totally out of kilter with the ethos of policing in England and Wales, but it certainly left an indelible mark.

As chief officers pushed the responsibility for meeting targets downwards through so-called Performance and Development Reviews (PDRs), managers at all levels became somewhat creative with the crime figures, manipulating the rules around how crime is both recorded and detected. This working practice was pushed further down the line so that officers on the front line failed to record crime and became more interested in how to increase their own detection rates by choosing to pick what became known in academic circles as ‘low hanging fruit’: easy detections, usually associated with minor crime such as possession of cannabis, and inevitably to the detriment of young people and minority ethnic groups. How else do you produce what is required when you have so little impact on the real problem? Nobody, perhaps save for some enlightened academics, could see what the problem was. If you aren’t too sure, let me spell it out: the police were never going to produce pleasing statistics because there was too much about the crime phenomenon that was outside of their control. The only way to do so was to cheat. To borrow a phrase from a recent Inquiry into policing, this was quite simply ‘institutional corruption’.

In the late 1990s the bubble began to burst to some extent. A series of inquiries and inspections showed that the police were manipulating data; cue another media frenzy. The National Crime Recording Standard came to fruition and with it another audit explosion. The auditing stopped and the manipulation increased (old habits die hard), so the auditing started again. In the meantime, the media and politicians and all those that mattered (at least that’s what they think) used crime data and criminal justice statistics as if they were somehow a spotlight on what was really happening. So, accurate when you want to show that the criminal justice system is failing but grossly inaccurate when you can show the data is being manipulated. As for the media, they had their cake and were scoffing it.

But it isn’t just about the data being accurate, it is also about it being politically acceptable at both the macro and micro level. The data at the macro level is very often somehow divorced from the micro. For example, in order for the police to record and carry out enquiries to detect a crime there need to be sufficient resources to enable officers to attend a reported crime incident in a timely manner. In one police force, the question of how many officers were required to respond to incidents in any given 24-hour period was carefully researched, triangulating various sources of data. This resulted in a formula that provided the optimum number of officers required, taking into account officers’ training, days off, sickness, briefings, paperwork and enquiries. It considered volumes and seriousness of incidents at various periods of time and the number of officers required for each incident. It also considered redundant time, that is, time that officers are engaged in activities that are not directly related to attending incidents. For example, time to load up and get the patrol car ready for patrol, time to go to the toilet, time to get a drink, time to answer emails and a myriad of other necessary human activities. The end result was that the formula indicated that nearly double the number of officers available were required. It really couldn’t have come as any surprise to senior management as the force struggled to attend incidents in a timely fashion on a daily basis. The dilemma though was that there was no funding for those additional officers, so the solution was to change the formula and obscure and manipulate the data.

With data, it seems, comes power. It doesn’t matter how good the data is, all that matters is that it can be used pejoratively. Politicians can hold organisations to account through the use of data. Managers in organisations can hold their employees to account through the use of data. And those of us that are being held to account are either told we are failing or made to feel like we are. I think a colleague of mine would call this ‘institutional violence’. How accurate the data is, or what it tells you, or more to the point doesn’t, is irrelevant; it is the power that is derived from the data that matters. The underlying issues and problems that make a significant contribution to the so-called ‘poor performance’ are obscured by manipulation of data and facts. How else would managers hold you to account without that data? And whilst you may point to so many other factors that contribute to the data, it is after all just seen as an excuse. Such is the power of the data that even if you are not performing badly, you still feel like you are.

The above account is predominantly about policing because that is my background. I was fortunate that I became far more informed about NPM and the unintended consequences of the performance culture and over-reliance on data due to my academic endeavours in the latter part of my policing career. Academia, it seemed to me, had seen through this nonsense and academics were writing about it. But it seems, somewhat disappointingly, that the very same managerialist ideals and practices pervade academia. You really would have thought they’d know better.

The Case of Mr Frederick Park and Mr Ernest Boulton

As a twenty-first century cis woman, I cannot directly identify with the people detailed below. However, I feel it important to mark LGBT+ History Month, recognising that so much history has been lost. This is detrimental to society’s understanding and hides the contribution that so many individuals have made to British and indeed, world history. What follows was the basis of a lecture I first delivered in the module CRI1006 True Crimes and Other Fictions but its roots are a little longer.

Some years ago I bought a very dear friend tickets for us to go and see a play in London (after almost a year of lockdowns, it seems very strange to write about the theatre). I’d read a review of the play in The Guardian and both the production and the setting sounded very interesting. As a fan of Oscar Wilde’s writing, particularly The Ballad of Reading Gaol and De Profundis (both particularly suited to criminological tastes), and with a long-held fascination with Polari, I found the play appealing. Nothing particularly unusual on the surface, but the experience, the play and the actors we watched that evening, were extraordinary. The play is entitled Fanny and Stella: The SHOCKING True Story and the theatre, Above the Stag in Vauxhall, London. Self-described as The UK’s LGBTQIA+ theatre, Above the Stag is often described as an intimate setting. Little did we know how intimate the setting would be. It’s a beautiful, tiny space, where the actors are close enough to just reach out and touch. All of the action (and the singing) happens right before your eyes. Believe me, with songs like Sodomy on the Strand and Where Has My Fanny Gone there is plenty to enjoy. If you ever get the opportunity to go to this theatre, for this play, or any other, grab the opportunity.

So who were Fanny and Stella? Christened Frederick Park (1848-1881) and Ernest Boulton (1848-1901), their early lives are largely undocumented beyond the very basics. Park’s father was a judge, Boulton, the son of a stockbroker. As perhaps was usual for the time, both sons followed their respective fathers into similar trades, Park training as an articled clerk, Boulton, working as a trainee bank clerk. In addition, both were employed to act within music halls and theatres. So far nothing extraordinary….

But on 29 April 1870, as Fanny and Stella left the Strand Theatre, they were accosted by undercover police officers:

‘“I’m a police officer from Bow Street […] and I have every reason to believe that you are men in female attire and you will have to come to Bow Street with me now”’

(no reference, cited in McKenna, 2013: 7)

Upon arrest, both Fanny and Stella told the police officers that they were men and at the police station they provided their full names and addresses. They were then stripped naked, making it obvious to the onlooking officers that both Fanny and Stella were (physically typical) males. By now, the police had all the evidence they needed to support the claims made at the point of arrest. However, they were not satisfied and proceeded to submit the men to a physically violent examination designed to identify if the men had engaged in anal sex. This was in order to charge both Fanny and Stella with the offence of buggery (also known as sodomy). The charges when they came, were as follows:

‘they did with each and one another feloniously commit the abominable crime of buggery’

‘they did unlawfully conspire together, and with divers other persons, feloniously, to commit the said crimes’

‘they did unlawfully conspire together, and with divers other persons, to induce and incite other persons, feloniously, to commit the said crimes’

‘they being men, did unlawfully conspire together, and with divers others, to disguise themselves as women and to frequent places of public resort, so disguised, and to thereby openly and scandalously outrage public decency and corrupt public morals’

Trial transcript cited in McKenna (2013: 35)

It is worth noting that until 1861 the penalty for being found guilty of buggery was death. After 1861 the penalty changed to penal servitude with hard labour for life.

You’ll be delighted to know, I am not going to give any spoilers, you need to read the book or even better, see the play. But I think it is important to consider the many complex facets of telling stories from the past, including public/private lives, the ethics of writing about the dead, the importance of doing justice to the narrative, whilst also shining a light on to hidden communities, social histories and “ordinary” people. Fanny and Stella’s lives were firmly set in the 19th century, a time when photography was a very expensive and stylised art, when social media was not even a twinkle in the eye. Thus their lives, like so many others throughout history, were primarily expected to be private, notwithstanding their theatrical performances. Furthermore, sexual activity, even today, is generally a private matter and there (thankfully) seems to be no evidence of a Victorian equivalent of the “dick pic”! Sexual activity, sexual thoughts, sexuality and so on are generally private and even when shared, kept between a select group of people.

This means that authors working on historical sexual cases, such as that of Fanny and Stella, are left with very partial evidence. Furthermore, the evidence which exists is institutionally acquired, that is, we only know their story through the ignominy of their criminal justice records. We know nothing of their private thoughts, we have no idea of their sexual preferences or fantasies. Certainly, the term ‘homosexual’ did not emerge until the late 1860s in Germany, so it is unlikely they would have used that language to describe themselves. Likewise, the terms transvestite, transsexual and transgender did not appear until the 1910s, 1940s and 1960s respectively, so Fanny and Stella could not use any of these as descriptors. Despite the blue plaque above, we have no evidence to suggest that they ever described themselves as ‘cross-dressers’. In short, we have no idea how either Fanny or Stella perceived themselves or how they constructed their individual life stories. Instead, authors such as Neil McKenna close the gaps in order to create a seamless narrative.

McKenna calls upon an excellent range of different archival material for his book (upon which the play is based). These include:

- National Archives in Kew

- British Library/British Newspaper Library, London

- Metropolitan Police Service Archive, London

- Wellcome Institute, London

- Parliamentary Archives, London

- Libraries of the Royal Colleges of Surgeons, London and Edinburgh

- National Archives of Scotland

Nevertheless, these archives do not contain the level of personal detail required to tell a fascinating story. Instead the author draws upon his own knowledge and understanding to bring these characters to life. Of course, no author writes in a vacuum and we all have a standpoint which impacts on the way in which we understand the world. So whilst we know the institutional version of some part of Fanny and Stella’s life, we can never know their innermost thoughts or how they thought of themselves and each other. Any decision to include content which is not supported by evidence is fraught with difficulty and runs the risk of exaggeration or misinterpretation. It is a constant reminder that the two at the centre of the case are dead and justice needs to be done to a narrative where there is no right of response.

It is clear that both the book and the play contain elements that we cannot be certain are reflective of Fanny and Stella’s lives or the world they moved in. The alternative is to allow their story to be left unknown or only told through police and court records. Both would be a huge shame. As long as we remember that their story is one of fragile human beings, with many strengths and frailties, narratives such as this allow us a brief glimpse into a hidden community and two, not so ordinary people. But we also need to bear in mind that in this case, as with Oscar Wilde, the focus is on the flamboyantly illicit and tells us little about the lived experience of so many others whose voices and experiences are lost in time.

References

McKenna, Neil, (2013), Fanny and Stella: The Young Men Who Shocked Victorian England, (London: Faber and Faber Ltd.)

Norton, Rictor, (2005), ‘Recovering Gay History from the Old Bailey,’ The London Journal, 30, 1: 39-54

Old Bailey Online, (2003-2018), ‘The Proceedings of the Old Bailey,’ The Old Bailey Online, [online]. Available from: https://www.oldbaileyonline.org/ [Last accessed 25 February 2021]